The highest certification level of CWNP is the CWNE. CWNP certifications are vendor-neutral, which means they cover wireless networking, and Wi-Fi specifically, at a general level. Cisco CCIE Wireless and Aruba ACMX are also high-level certifications, but each covers a single vendor’s products and solutions. The real world is far more diverse than that.

CWNP

Certified Wireless Network Professionals is a U.S.-based organization that has been developing a certification program for Wi-Fi/802.11/WLAN technology since 1999. The fundamental idea was to be vendor-neutral. The IEEE 802.11 standard applies to everyone, as do the laws of physics governing radio waves and their behavior. If you know the Wi-Fi fundamentals, applying the knowledge to any vendor’s solution is fairly straightforward.

The entry-level CWNP certifications are the CWS and CWT (Certified Wireless Specialist and Certified Wireless Technician). The former is for non-technical personnel such as salespeople; the latter is somewhat more technical and is intended for installers, for example. These two used to be a single certification known as CWTS. No entry-level certification is required for the higher levels.

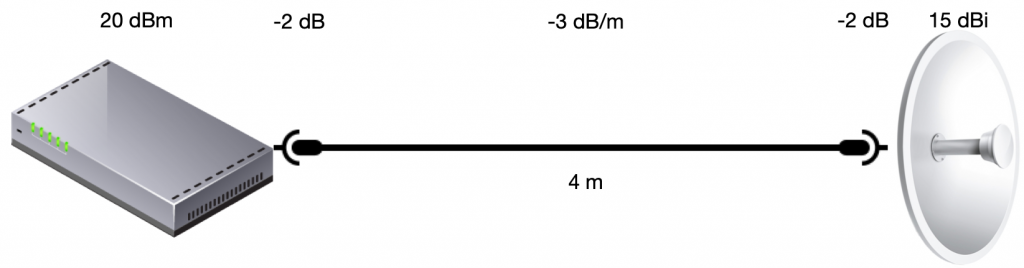

Certified Wireless Network Administrator, or CWNA, is more advanced. It requires a thorough understanding of radio frequency physics, radio waves and antennas, as well as the software side, such as encryption and authentication. CWNA is an excellent way of finding out where you stand in the Wi-Fi crowd. From a Finnish standpoint, outdoor antennas and microwave links are a lot less important than they appear in CWNA. On the other hand, they are only covered in CWNA.

After CWNA come three professional-level certifications: Design Pro, Security Pro and Analysis Pro (CWDP, CWSP and CWAP). Each one expands on subjects already covered in CWNA. Design Pro is about designing wireless networks, Security Pro is about data protection (encryption and authentication), and Analysis Pro is about protocol details, network packet contents and troubleshooting.

CWNE is different. There are no tests to take or classes to attend. CWNA, CWDP, CWSP and CWAP are prerequisites. In addition, you need three years of full-time field experience, other supporting certifications, documented Wi-Fi projects and endorsers. CWNE doesn’t cost anything, and you can’t buy it. You apply for CWNE status, and CWNP grants it if they deem you worthy. The CWNE program started in 2001, and as of this writing 307 certifications have been granted. In recent years about 50 certifications have been granted annually worldwide.

My Path

I have been a full-time IT consultant since the 1980s. The first networks were AppleTalk, and telecommunication was accomplished with modems. I came across TCP/IP on Unix systems as early as the ’80s, but it was the Internet that made TCP/IP ubiquitous in the ’90s.

I remember seeing my first Wi-Fi access points around the turn of the millennium. I did install some early 802.11b access points, but they were curiosities and didn’t see much real use. Slowly APs became commonplace and the number of users increased.

Some Wi-Fi networks didn’t work as expected, and I was often asked to troubleshoot. Unfortunately, I couldn’t do much beyond checking the configuration. I tried to find someone skilled at troubleshooting Wi-Fi, but I never found anyone. Nobody wanted to admit anything, but I saw shaking heads and shrugs. I, too, started to believe Wi-Fi was impossible to understand and that you could only hope it worked.

After 2010 Wi-Fi became a necessity. Smartphones and tablets increased the demand for wireless connectivity. The users wanted to use their laptops wirelessly, too. 802.11n provided the capacity, but not all networks performed the same. I still couldn’t find anyone to help, so I decided to dig into it myself. If it was designed by humans, it had to be comprehensible to humans.

I read books, tried out products from different vendors and set up labs for different scenarios. I was still uneasy about whether I was doing the right thing. Then I came across CWNP. In 2015 I took the CWTS test without any preparation. I passed with 50/60, which has been my worst score so far. I knew next to nothing about outdoor Wi-Fi, microwave links and special antennas. All my experience was based on indoor office networks. On the other hand, I knew those well, so I was on the right track. And now I had found a learning path to follow.

For all my CWNP certifications I have studied on my own from books. There have been only occasional CWNP classes in Finland. On the other hand, I don’t believe a single week can prepare you for a test. I, at least, have studied for months, though not full time of course. On the side I have acquired vendor-specific certifications from Cisco, Ubiquiti, MikroTik, Aruba and LigoWave. All the vendors must adhere to the same laws of physics and the 802.11 standard, so the solutions have more in common than they have differences. Naturally, the products all look different and the level of configuration control differs.

A common question is “Which order is best for the Pro exams?” There is no set answer. The only requirement is that you must have passed all of them and they must be valid at the time of application. I did Security Pro first, because I have a strong security background. Key exchanges, encryption methods, handshakes and PKI are familiar to me. I took Analysis Pro next and Design Pro last. With hindsight I would suggest Design Pro first, because I found it the easiest and the most applicable to general Wi-Fi work. Take Security Pro second and save Analysis Pro for last. Analysis Pro goes last because it is the toughest, and you don’t want it to expire if you take more than three years to pass them all. The exams also overlap somewhat, so by taking Design Pro and Security Pro first you will already have prepared for some of the Analysis Pro material.

Of the books I have read, I can recommend the CWNP series by Sybex. CWNP used to have a deal with Sybex to publish books aligned with the certifications. The exams are updated every three years, and Sybex would produce a new edition to cover the latest exam. This deal no longer exists, and CWNP now publishes its own “official” study guides. The quality of these books hasn’t been impressive. Fortunately, Sybex has continued updating its series. Just make certain the book covers the latest version of the exam before buying. Even if you don’t plan to certify, the recently updated CWNA study guide is an excellent handbook that covers Wi-Fi thoroughly.